On February 16, 2026, the Maryland General Assembly provided a significant update regarding pending legislation focused on the implementation of artificial intelligence within the state's public school system. While the bill primarily addresses educational standards, the legislative move signals a broader shift toward regulated AI usage across professional sectors in the United States. For legal professionals, it underscores the need to choose a technical architecture that keeps legal AI deployments transparent, compliant, and accurate.

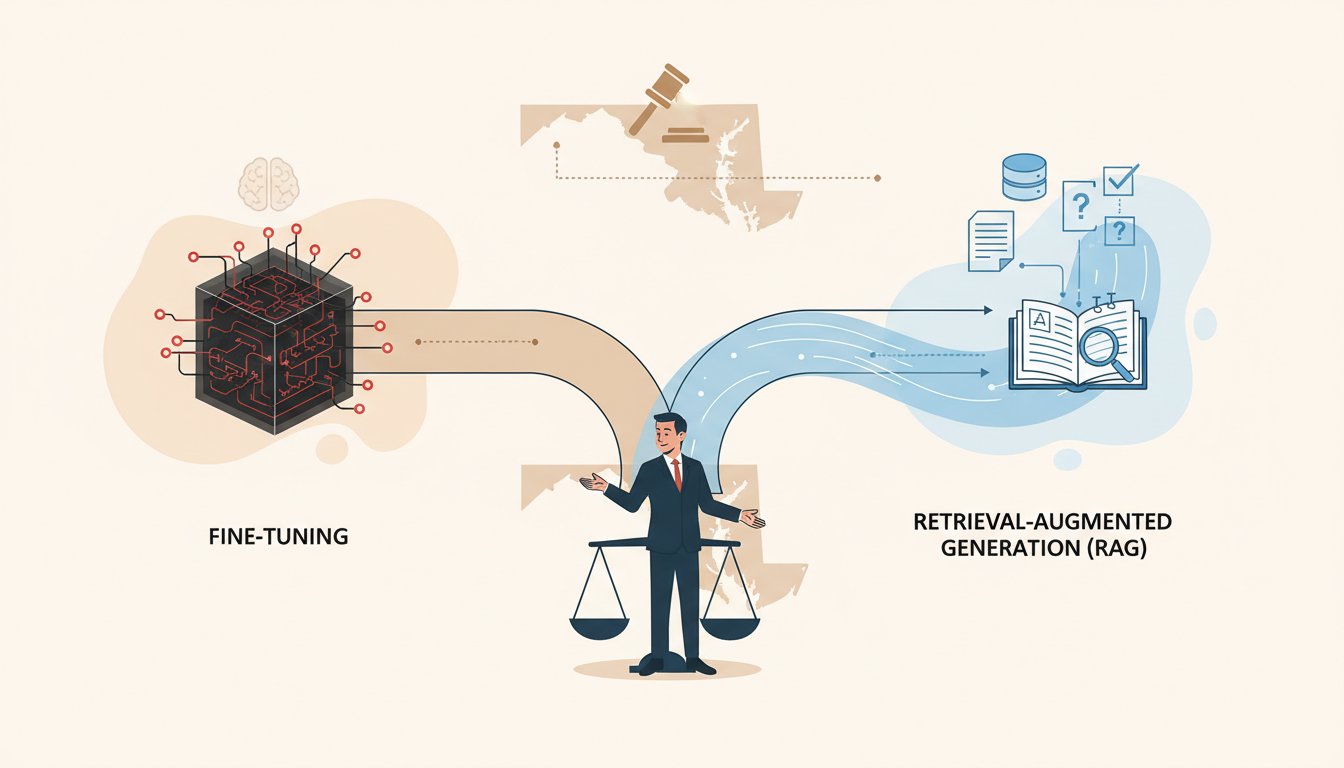

The Choice Between RAG and Fine-Tuning

As law firms look to adopt generative AI, the debate often centers on RAG versus fine-tuning. Fine-tuning retrains a model on a specific legal dataset to adjust its internal parameters. While this can help the model learn specific terminology or stylistic nuances, the model's knowledge stays fixed at the point of training, so picking up new case law or legislation means retraining. Fine-tuning can also deepen the "black box" problem, where the reasoning behind an output is unclear and cannot be traced to a particular source.

In contrast, Retrieval-Augmented Generation (RAG) has the model look up relevant documents in a secure database before it generates a response. This method is often preferred for legal work because each answer can be traced to the documents that were retrieved, which provides a clear audit trail. Firms can run these systems on secure, private infrastructure so that sensitive client data is never exposed to public models.
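The retrieve-then-generate flow can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a production design: the `Document` type, the `retrieve` and `build_prompt` helpers, and the document IDs are all hypothetical, and simple keyword overlap stands in for the embedding-based search a real system would more likely use. The call to the language model itself is left out.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str  # hypothetical internal identifier used for citations
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    """Return up to top_k documents sharing the most terms with the query.

    Keyword overlap is an assumption made for brevity; it stands in for
    whatever similarity search the firm's document store provides.
    """
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in corpus]
    relevant = [(score, doc) for score, doc in scored if score > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_prompt(query: str, sources: list[Document]) -> str:
    """Assemble a prompt that carries the retrieved text with its source IDs."""
    if not sources:
        # With nothing retrieved, there is nothing to ground an answer in.
        return f"No supporting documents were found for: {query}"
    cited = "\n".join(f"[{doc.doc_id}] {doc.text}" for doc in sources)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{cited}\n\nQuestion: {query}"
    )


corpus = [
    Document("memo-001", "The statute of limitations for written contract claims is discussed in this memo."),
    Document("memo-002", "Office parking policy and building access hours."),
    Document("memo-003", "Limitations periods for tort claims differ from contract claims."),
]
query = "statute of limitations for contract claims"
sources = retrieve(query, corpus)
prompt = build_prompt(query, sources)
```

Because the source IDs travel with the prompt, the list in `sources` can be logged alongside the final answer, which is the audit trail described above.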

Technical Considerations for Legal Accuracy

The Maryland legislative update emphasizes the need for oversight and accuracy in AI-driven decision-making. In a legal context, accuracy is paramount. One of the primary advantages of RAG is its ability to ground model responses in specific, retrieved documents. Attorneys should understand that this reduces, but does not eliminate, hallucinations, which are a common risk when models rely solely on their training data.

Key benefits of RAG in a law firm setting include:

- Enhanced transparency by citing specific internal or external sources.

- Lower computational costs, since updating a document index is typically cheaper than repeatedly fine-tuning a model.

- Better handling of dynamic information, such as changing case law or legislative updates like the recent Maryland bill.

Implementing an AI Governance Framework

As state governments begin to formalize AI standards, law firms must move beyond experimental use toward structured deployment. Establishing an AI governance framework for law firms is an essential step in managing the ethical and operational risks associated with these technologies. Such a framework should dictate how data is ingested, who has access, and how the model's outputs are verified by human counsel.
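The three controls named above (ingestion, access, and human verification) can be written down as explicit checks. The sketch below is purely illustrative: `GovernancePolicy`, `DraftOutput`, the function names, and the source and role labels are hypothetical and do not come from any particular product or regulation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GovernancePolicy:
    approved_sources: frozenset  # where documents may be ingested from
    authorized_roles: frozenset  # who may query the system


@dataclass
class DraftOutput:
    text: str
    reviewed_by: Optional[str] = None  # attorney who verified the draft, if any


def can_ingest(policy: GovernancePolicy, source: str) -> bool:
    """Only documents from approved sources may enter the index."""
    return source in policy.approved_sources


def can_query(policy: GovernancePolicy, role: str) -> bool:
    """Only authorized roles may submit questions to the model."""
    return role in policy.authorized_roles


def release(draft: DraftOutput) -> str:
    """Refuse to release any model output that counsel has not reviewed."""
    if draft.reviewed_by is None:
        raise PermissionError("Output must be verified by human counsel before release.")
    return draft.text


# Hypothetical example values.
policy = GovernancePolicy(
    approved_sources=frozenset({"document-management-system"}),
    authorized_roles=frozenset({"attorney", "paralegal"}),
)
draft = DraftOutput(text="Draft summary of the retrieved memo.")
```

Calling `release(draft)` here raises `PermissionError` until `reviewed_by` is set, which makes the human-review step a hard gate instead of a convention.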

Privacy also remains a top concern for firms handling privileged information. When evaluating vendors, firms should investigate the underlying infrastructure and establish what a "private LLM" actually means in terms of data isolation and security protocols. A documented governance framework also helps firms stay ahead of state and federal mandates as they emerge.

Conclusion

The legislative update from Maryland serves as a reminder that AI regulation is maturing. For legal practitioners, the technical choice between RAG and fine-tuning is not merely a matter of performance, but one of risk management and transparency. By prioritizing RAG-based systems and robust governance, firms can leverage the power of AI while maintaining the high standards of accuracy and confidentiality required by the legal profession.