Navigating the Legal Ethics of Generative AI in Modern Practice

The integration of artificial intelligence into the legal profession has transformed how attorneys handle document review, legal research, and draft preparation. However, as these tools become more prevalent, maintaining professional standards requires a deep understanding of the legal ethics of generative AI. For U.S. law firms, the intersection of technological efficiency and ethical obligation is primarily governed by the American Bar Association (ABA) Model Rules of Professional Conduct, which serve as a foundation for state-level regulations.

Implementing a Law Firm AI Policy Template for Ethical Practice

As legal professionals navigate the rapid integration of advanced automation, the need for a standardized law firm AI policy template has become central to maintaining professional responsibility. U.S. law firms are increasingly required to demonstrate that their use of technology aligns with existing duties of competence, confidentiality, and supervision. Establishing a clear set of guidelines ensures that both attorneys and support staff understand the parameters of acceptable use when handling client data.

Integrating Google’s Antitrust Remedies into an AI Governance Framework for Law Firms

On March 4, 2026, Google unveiled a significant proposal to revamp its Android app ecosystem to resolve long-standing antitrust claims. According to reports from Bloomberg Law, this move is designed to lower barriers for third-party app distribution and foster a more competitive landscape for rival AI assistants. For legal professionals, this shift underscores the necessity of maintaining a robust, comprehensive AI governance policy to manage evolving platform regulations.

The Accountability Imperative: How to Safely Automate Contract Review Process Amid Rising AI Oversight

As of March 2026, the legal industry is facing a critical juncture regarding the intersection of artificial intelligence, attorney-client privilege, and regulatory enforcement. Recent developments in both federal courts and regulatory agencies have signaled that the era of informal AI experimentation is ending, replaced by a strict accountability imperative. For law firms and in-house counsel, the challenge is to find ways to automate the contract review process without compromising the foundational protections of the legal profession.

Vietnam’s New AI Law and the Impact on Your Legal Document Generation System

Vietnam’s Law on Artificial Intelligence officially entered into force on March 1, 2026, establishing a pioneering regulatory framework in Southeast Asia. While centered in Vietnam, the statute carries significant extraterritorial weight, impacting any international provider or developer whose artificial intelligence tools interact with Vietnamese users or data. U.S.-based legal departments must now evaluate how their current legal document generation system and broader technical infrastructure align with these new cross-border transparency and risk management standards.

The Impact of Federal Anthropic Restrictions on Transparent Legal AI

On February 28, 2026, the landscape of federal procurement and artificial intelligence changed significantly as the United States Department of Defense (DoD) designated Anthropic as a supply-chain risk to national security. This move, followed by a directive from President Donald Trump, effectively bars federal agencies and their contractors from conducting commercial activity with Anthropic. For legal professionals and government contractors, this development necessitates an immediate review of existing tech stacks and procurement compliance protocols.

FTC Guidance on COPPA Age Verification and the Need for Legal Prompt Engineering Services

On February 25, 2026, the Federal Trade Commission (FTC) issued a significant policy statement aimed at incentivizing the use of age-verification technologies to protect children online. This move signals a shift in the enforcement posture regarding the Children’s Online Privacy Protection Act (COPPA), specifically for operators of general-audience and mixed-audience websites. The Commission has established a conditional non-enforcement position for operators that collect personal information solely for the purpose of determining a user’s age, provided they meet strict data-handling requirements.

OpenAI Victory and the Evolving Standards for Open Source Legal AI Models

On February 24, 2026, a U.S. federal court in California dismissed a high-profile trade secrets and employee poaching lawsuit filed by xAI against OpenAI. This ruling serves as a significant benchmark for the legal industry, particularly for counsel advising technology firms on intellectual property and talent acquisition. While the court granted xAI leave to amend its complaint, the initial dismissal highlights the rigorous pleading standards required to hold a corporate entity liable for the alleged misappropriation of proprietary code by former employees.

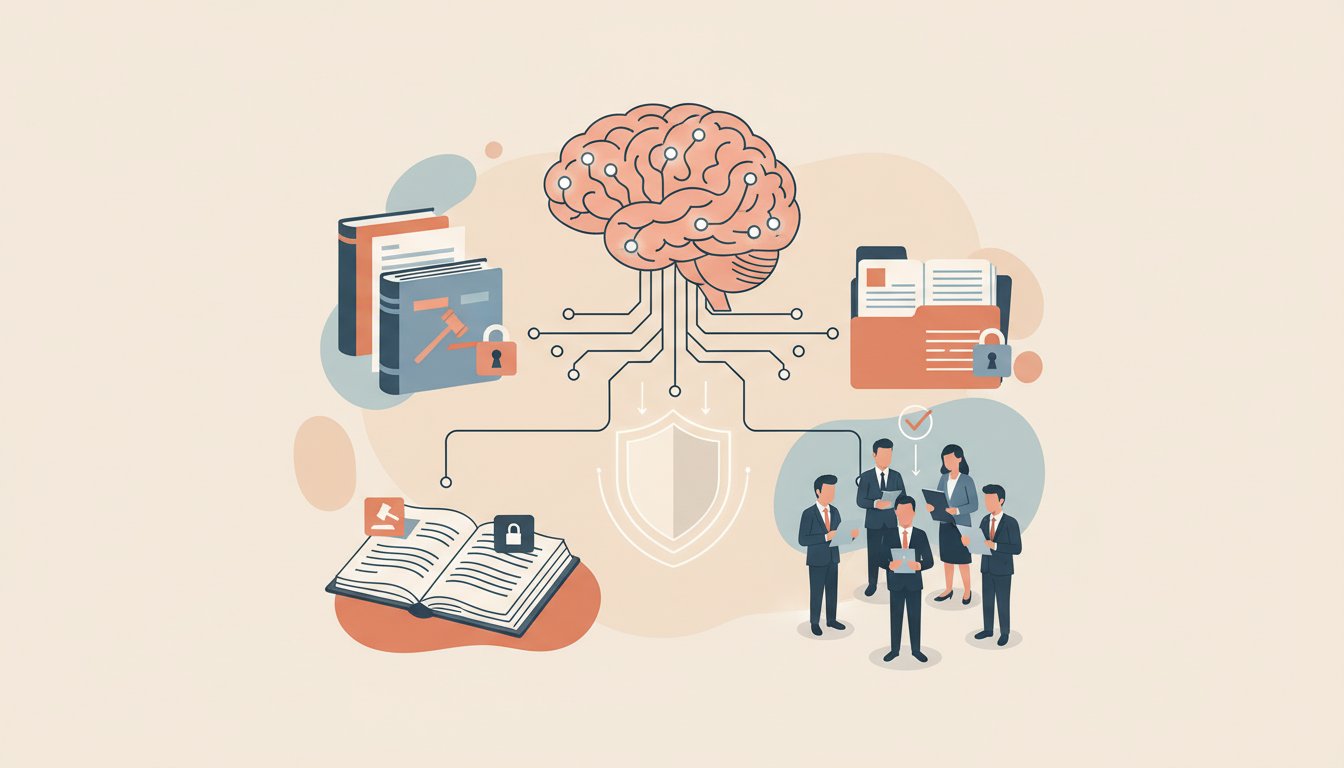

Key Considerations for On-Premise Legal AI Deployment

As legal departments and law firms integrate generative artificial intelligence into their workflows, the architecture of these systems has become a primary concern for IT and compliance officers. Choosing an on-premise legal AI deployment allows organizations to retain full physical and digital control over their proprietary data and client communications. This model is increasingly favored by firms that must satisfy stringent security protocols that standard cloud-based solutions may not provide.

Implementing a Private LLM for Law Firms to Protect Client Confidentiality

As legal practices increasingly adopt generative artificial intelligence to streamline workflows, the priority remains the protection of attorney-client privilege and sensitive data. Traditional cloud-based AI models often raise concerns regarding data leakage and third-party access. To address these challenges, many practices are exploring private LLM deployments to ensure that all data processing remains within a controlled environment.

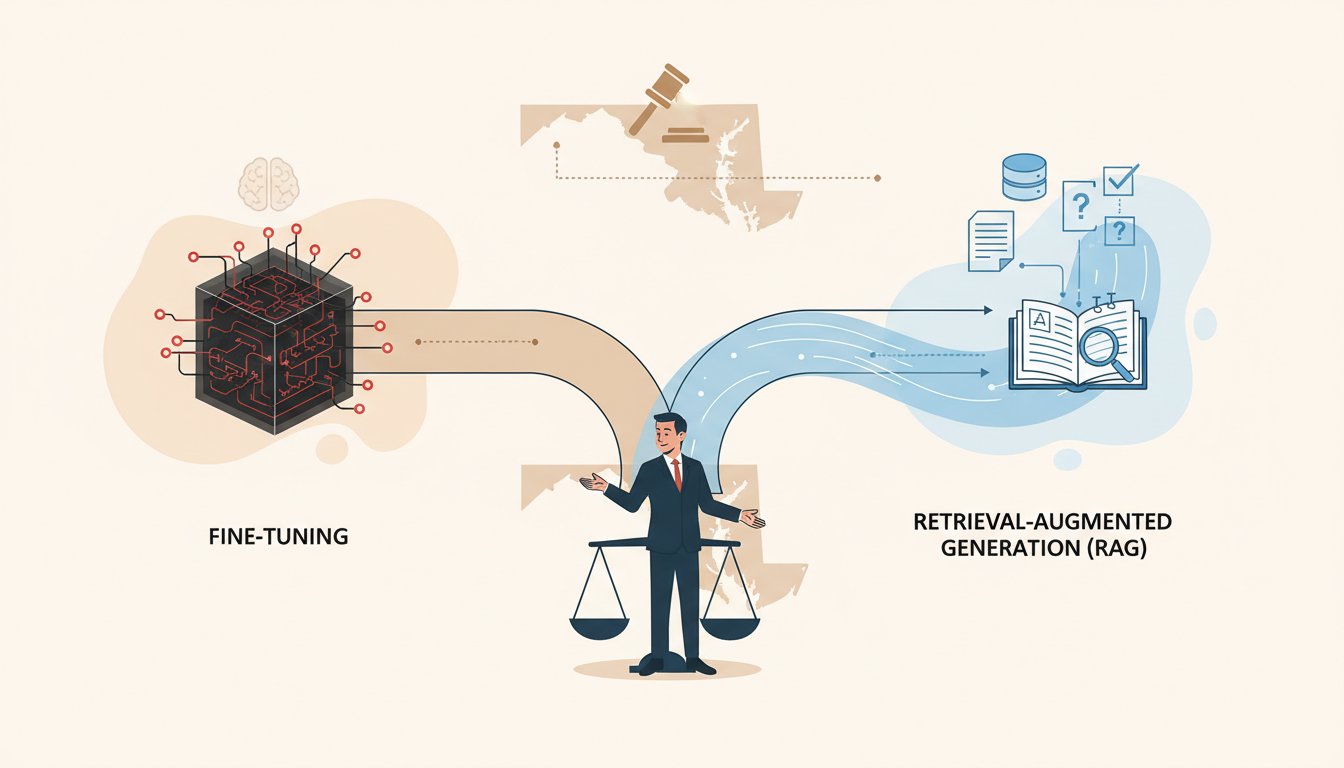

Understanding RAG vs Fine-Tuning for Law Firms Following Maryland AI Legislative Updates

On February 16, 2026, the Maryland General Assembly provided a significant update regarding pending legislation focused on the implementation of artificial intelligence within the state's public school system. While the bill primarily addresses educational standards, the legislative move signals a broader shift toward regulated AI usage across all professional sectors in the United States. For legal professionals, this highlights the necessity of choosing the right technical architecture when deploying transparent legal AI solutions to ensure compliance and accuracy.

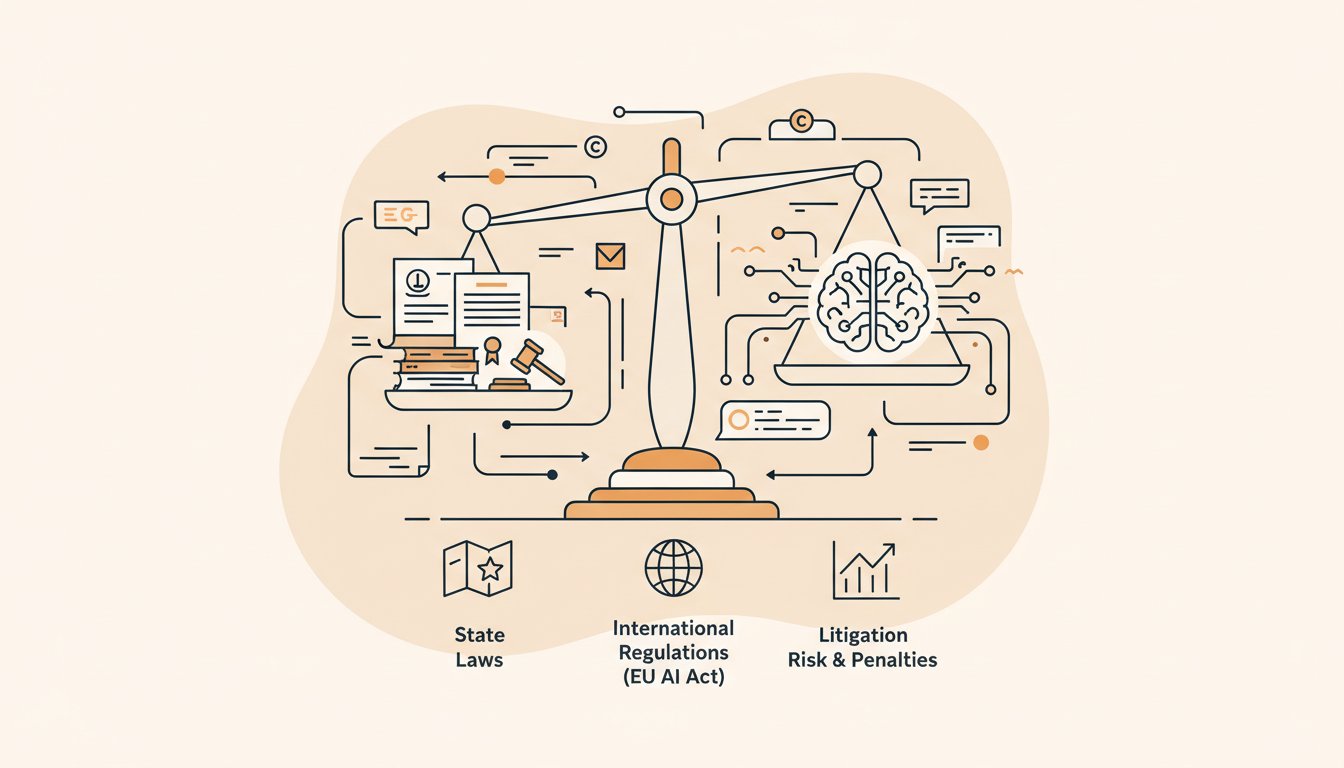

Navigating EU AI Act Enforcement with Private RAG Architecture

As of mid-February 2026, the global legal landscape for artificial intelligence has transitioned from theoretical frameworks into a phase of active enforcement. While many organizations previously focused on policy development, recent regulatory actions in the European Union signal that the time for practical compliance has arrived. For U.S. law firms and corporate legal departments, these developments necessitate a more robust legal strategy for secure AI implementation to manage cross-border risks and ensure data integrity.

Federal Preemption and the Utah AI Transparency Act: Navigating the Need for a Vector Database for Legal Search

A recent development in the regulatory landscape for artificial intelligence has highlighted a growing conflict between federal policy and state-level legislative efforts. In February 2026, reports surfaced that the White House Office of Intergovernmental Affairs issued a formal communication expressing categorical opposition to Utah’s House Bill 286, also known as the Artificial Intelligence Transparency Act. This intervention signals a significant shift in how the federal executive branch may use preemption strategies to manage the patchwork of emerging state AI laws.

How RAG for Law Firms Improves Technical Accuracy and Data Security

The integration of generative artificial intelligence into the legal sector has shifted from experimental use to a core operational requirement. However, standard large language models (LLMs) often struggle with specific legal contexts and data privacy requirements. To address these limitations, many organizations are turning to retrieval-augmented generation (RAG) for legal documents. This architectural approach allows firms to connect their private data repositories to an AI model, so that the generated output is grounded in verified, internal facts rather than general training data.
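
The retrieval step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production design: the document store `FIRM_DOCS`, the helper names, and the word-overlap scoring are all assumptions made for the example. A real deployment would typically use an embedding model and a vector database, and would send the resulting prompt to an LLM.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents; IDs and text are invented for illustration.
FIRM_DOCS = {
    "memo-001": "Indemnification clauses in our vendor agreements cap liability at fees paid.",
    "memo-002": "Retention policy: client files are kept seven years after matter close.",
    "memo-003": "Vendor agreements require thirty days written notice before termination.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def score(query: str, doc: str) -> float:
    """Cosine similarity between bag-of-words counts (a stand-in for embeddings)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    overlap = sum(q[t] * d[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
        sum(v * v for v in d.values())
    )
    return overlap / norm if norm else 0.0


def retrieve(query: str, documents: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return the k (doc_id, text) pairs that score highest against the query."""
    ranked = sorted(
        documents.items(), key=lambda item: score(query, item[1]), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str, documents: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved passages."""
    passages = retrieve(query, documents, k)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the passages below and cite their IDs.\n"
        f"{context}\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    print(build_prompt("How long are client files kept?", FIRM_DOCS, k=1))
```

Because the model only sees passages pulled from the firm's own repository, each answer can be traced back to a document ID, which is the grounding property the excerpt describes.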

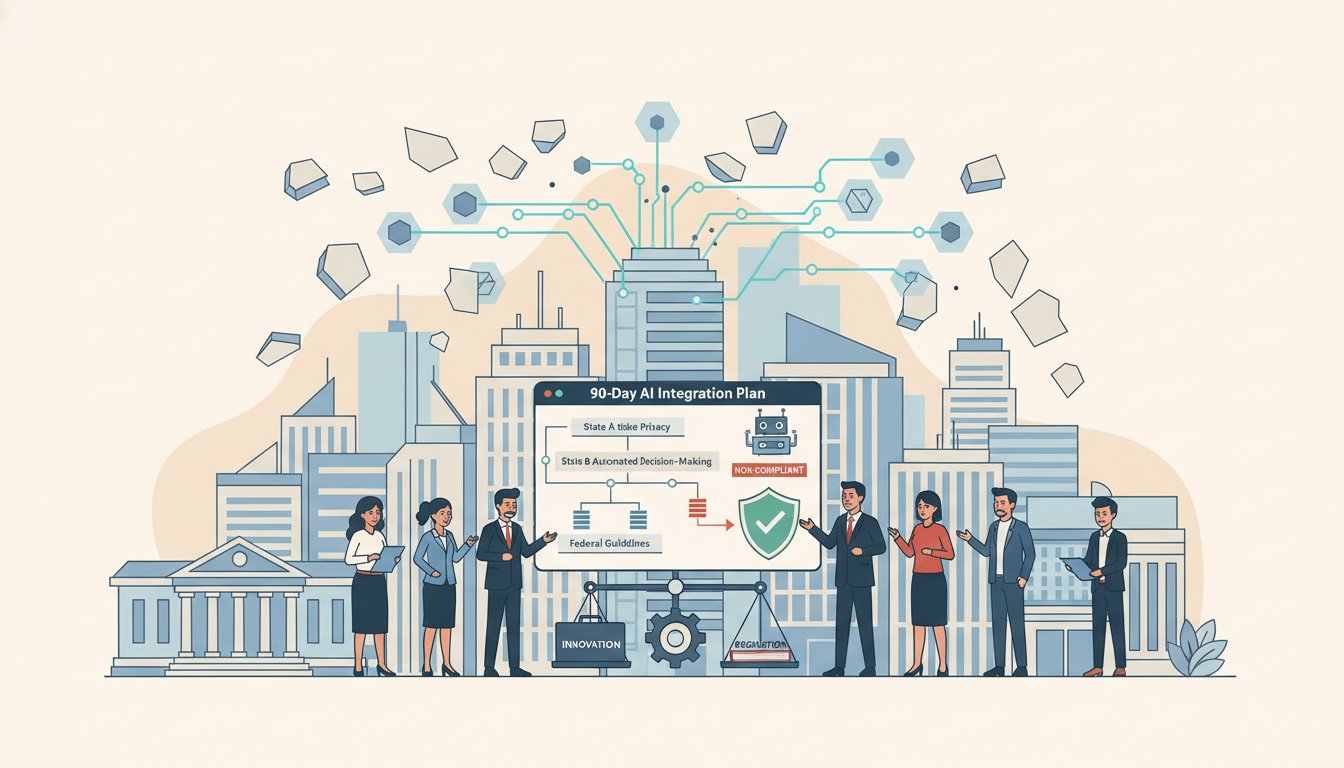

Maximizing AI ROI for Law Firms: Navigating the 2026 Regulatory Landscape

As we move through the first quarter of 2026, the legal industry is witnessing a significant shift from experimental artificial intelligence adoption to structured, policy-driven implementation. For U.S. law firms and corporate legal departments, understanding the global regulatory pulse is essential to maintaining a competitive edge and ensuring that technology investments yield a high return. Recent updates from international policy groups and domestic judicial discussions suggest that the standards for responsible AI use are hardening into formal expectations.

Developing an Effective AI Strategy for Mid-Sized Law Firms Amid Shifting State Regulations

As state-level artificial intelligence regulations continue to proliferate across the United States, legal organizations face a complex compliance landscape. Recent reports indicate that the rapid introduction of state AI rules could potentially leave technology leaders with systems that are functionally unusable if they fail to align with emerging statutory requirements. For legal practices, navigating these mandates is no longer just a technical hurdle but a critical operational necessity.

How a Fractional Chief AI Officer for Law Firms Addresses Training Data Litigation Risks

Recent investigative reporting has shed light on the aggressive methods AI developers use to acquire training data, creating a new landscape of litigation risk and discovery obligations. For legal organizations, the revelation that AI startups have engaged in large-scale scanning and destruction of physical books underscores the need for expert guidance. Engaging a fractional Chief AI Officer can help law firm partners navigate the technical and legal complexities of dataset provenance and copyright exposure.

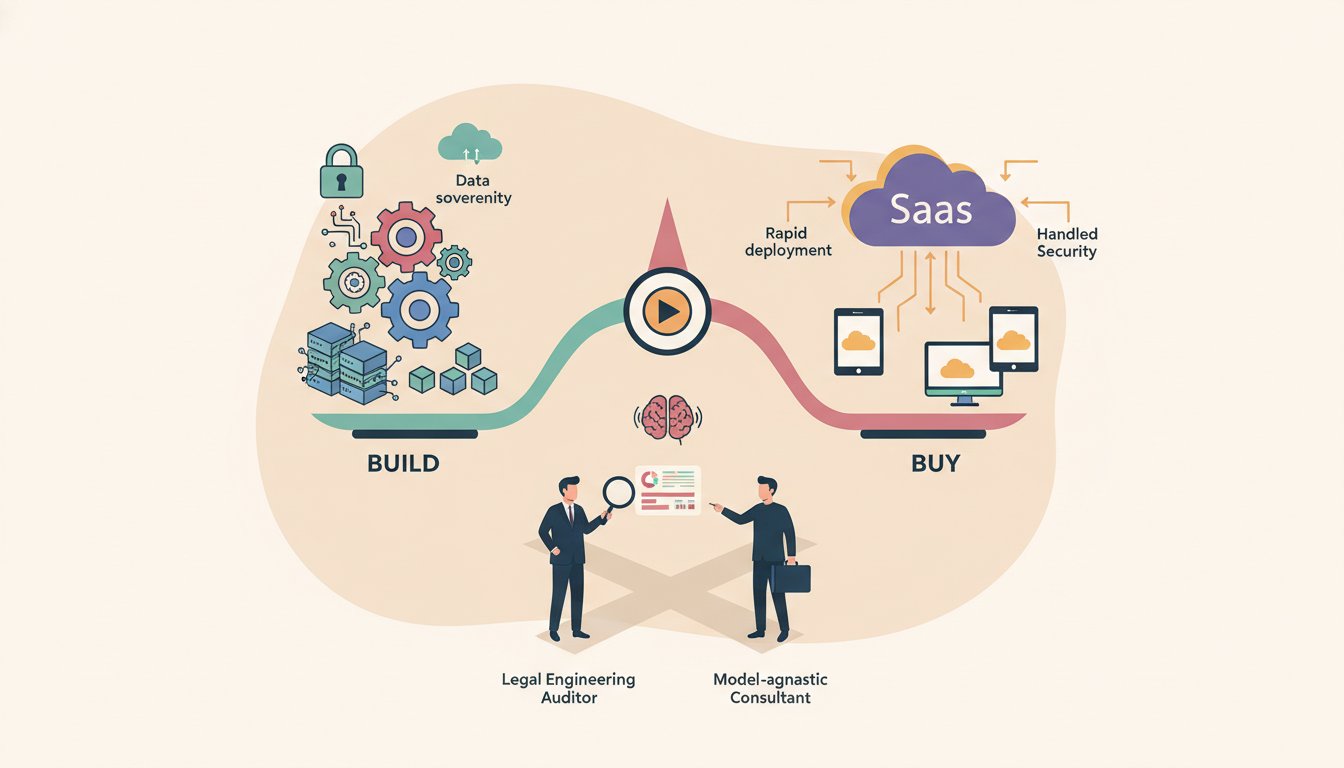

Evaluating the Build vs Buy Legal AI Framework for Modern Law Firms

Legal departments and law firms increasingly face a critical strategic crossroads regarding their technological infrastructure. The build-versus-buy decision for legal AI involves a complex assessment of long-term scalability, data security, and immediate operational utility. As generative AI technology matures in early 2026, the industry has moved beyond general-purpose tools toward specialized applications that handle sensitive privileged information.

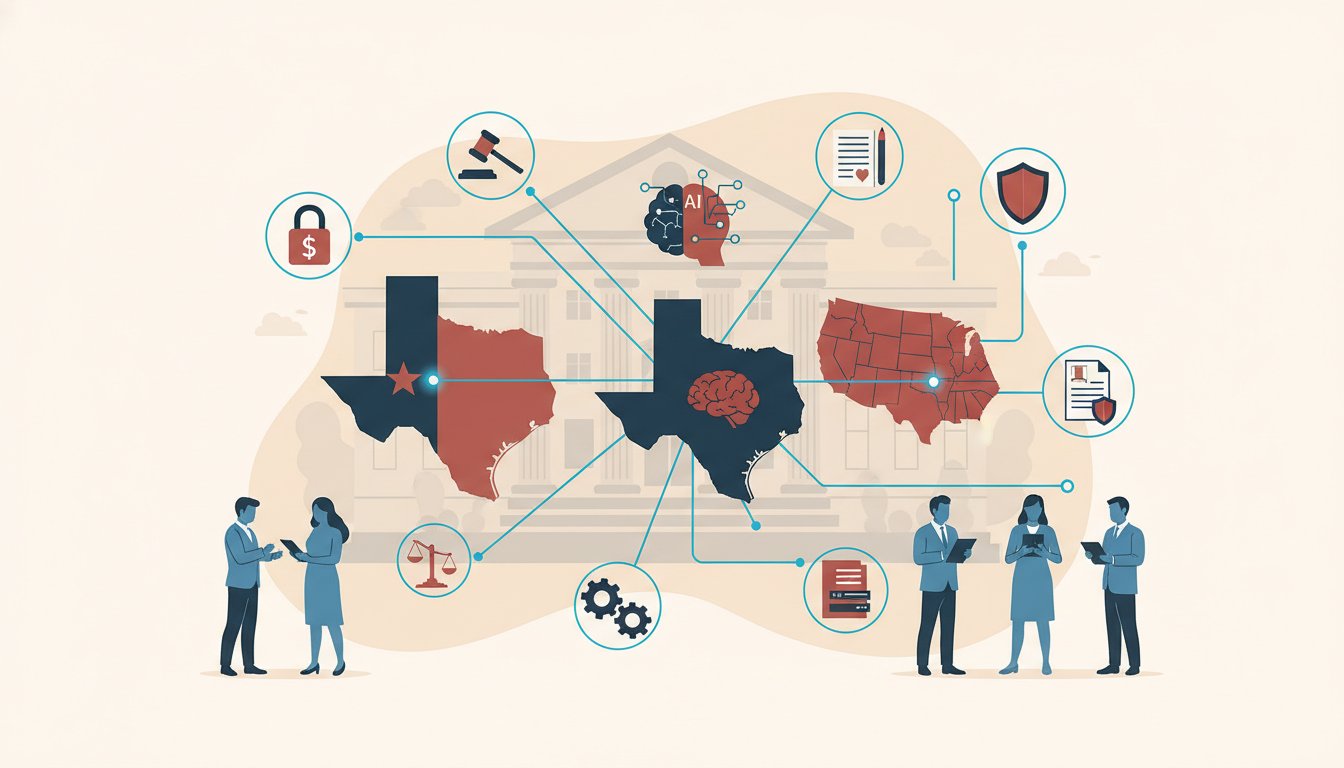

The Role of Custom Legal AI Development in the Face of New State Regulations

As of early February 2026, the regulatory landscape for artificial intelligence in the United States is shifting from theoretical debate to active enforcement and implementation. Recent industry summaries indicate that state-level activity is now a primary driver of compliance obligations for law firms and their corporate clients. With several state AI statutes and reporting requirements taking effect, the need for custom legal AI development that prioritizes transparency and auditability has become a practical necessity for legal departments.

The Strategic Value of a Legal Engineering Consultant in Modern Practice

The intersection of law and technology has reached a critical juncture. As legal technology moves from basic automation to sophisticated algorithmic decision-making, law firms and corporate legal departments face the challenge of integrating these tools without compromising ethical standards or operational efficiency. Engaging a legal engineering consultant has become a necessity for organizations looking to navigate the complexities of digital transformation while maintaining a competitive edge in a high-stakes market.

2026 Regulatory Deadlines and AI Copyright: A Practical Framework for Legal Teams

As of early 2026, the legal landscape surrounding artificial intelligence has shifted from theoretical risk to immediate enforcement. A report published on January 27, 2026, titled "The $1.5 Billion Reckoning," highlights a critical turning point for law firms and corporate legal departments. The convergence of state-level mandates, international transparency requirements, and high-value copyright litigation has made the provenance of training data a central pillar of corporate defensibility.

Maximizing Litigation Efficiency Through Advanced Legal Technology

The legal industry is currently navigating a significant transition as practitioners seek to balance traditional advocacy with modern efficiency. The integration of legal AI software is no longer a peripheral consideration for mid-sized and large firms; it has become a central component of competitive practice. By leveraging these tools, attorneys are better equipped to manage the increasing volume of digital evidence and the complexities of modern discovery.

Navigating the Evolving State AI Regulatory Landscape

The regulatory environment for artificial intelligence in the United States is currently undergoing a period of significant flux as state-level statutes begin to take effect. As legal professionals and in-house counsel look toward the compliance requirements of 2026, the focus has shifted toward specific state mandates, most notably Colorado’s S.B. 205 and various measures in Illinois. These legislative developments are creating a new suite of obligations for employers and the legal teams that advise them.

The Integration of Artificial Intelligence in Modern Litigation

The legal industry is currently undergoing a significant transformation as technological advancements reshape how firms handle complex caseloads. For practitioners in the United States, the adoption of legal AI software has moved from a niche interest to a central component of practice management and trial preparation. As the volume of digital evidence grows, traditional manual methods of review and drafting are increasingly being supplemented by automated systems designed to enhance accuracy and speed.

CoreWeave Securities Class Action: Key Deadlines and Implications for AI Infrastructure

A securities class action lawsuit has been filed against CoreWeave, Inc. (NASDAQ: CRWV), a specialized provider of cloud and GPU infrastructure for artificial intelligence applications. The action, captioned Masaitis v. CoreWeave, Inc., No. 2:26-cv-00355, is currently pending in the United States District Court for the District of New Jersey. The lawsuit follows allegations that the company made material misrepresentations regarding its operational capabilities and the status of its data center developments.

The Growing Risk of AI-Fabricated Case Law in Court Filings

A recent report published on January 24, 2026, has highlighted a critical operational and ethical challenge for the legal profession: the emergence of entirely fabricated legal authorities generated by artificial intelligence. According to the reporting, generative AI systems are now producing fictional cases and citations that have successfully bypassed initial screenings to enter real court submissions. This phenomenon, often referred to as hallucination, presents immediate malpractice risks for law firms and corporate legal departments globally.

China Proposes New Regulatory Framework for Anthropomorphic AI

On January 25, 2026, the Cyberspace Administration of China (CAC) concluded its public consultation on the "Interim Measures for the Administration of Anthropomorphic AI Interaction Services." Originally published for comment on December 27, 2025, the draft establishes a prescriptive regulatory regime for artificial intelligence systems designed to mimic human personality or provide emotional companionship. For U.S.-based law firms and multinational corporations, these rules represent a significant expansion of the global AI compliance landscape.

Technical Limitations in Single-Embedding AI: Implications for Legal Research and eDiscovery

A recent technical study conducted by researchers at Google and Johns Hopkins University has identified structural limitations in single-embedding AI retrievers, a finding with significant implications for the legal industry. As reported on January 23, 2026, the study demonstrates that as databases grow in size, the common approach of mapping documents and queries to a single vector each becomes increasingly unreliable. This creates a provable risk for high-recall tasks where capturing every relevant document combination is essential.
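
A toy example may help make the idea of an unreachable document combination concrete. The sketch below is not the study's method or data: it assumes three hypothetical documents with one-dimensional embeddings (real retrievers use hundreds of dimensions) and scores each document by multiplying its single number by the query's single number. Sweeping over many queries shows that one pair of documents can never appear together as the top two results.

```python
from itertools import combinations

# Hypothetical one-dimensional embeddings, chosen only for illustration.
DOC_EMBEDDINGS = {"doc_a": -1.0, "doc_b": 0.5, "doc_c": 2.0}


def top_k(query_embedding: float, k: int = 2) -> frozenset[str]:
    """Rank documents by dot product with the query and keep the best k."""
    ranked = sorted(
        DOC_EMBEDDINGS,
        key=lambda doc_id: query_embedding * DOC_EMBEDDINGS[doc_id],
        reverse=True,
    )
    return frozenset(ranked[:k])


def reachable_sets(k: int = 2) -> set[frozenset[str]]:
    """Collect every top-k set produced by a sweep of non-zero query values."""
    queries = [q / 10 for q in range(-50, 51) if q != 0]
    return {top_k(q, k) for q in queries}


# Every pair a reviewer might need versus the pairs any query can return.
ALL_PAIRS = {frozenset(pair) for pair in combinations(DOC_EMBEDDINGS, 2)}
REACHABLE = reachable_sets()
UNREACHABLE = ALL_PAIRS - REACHABLE

if __name__ == "__main__":
    for pair in UNREACHABLE:
        print("No query returns this pair as the top 2:", sorted(pair))
```

In this toy setup a positive query always ranks `doc_c` then `doc_b` first, and a negative query always ranks `doc_a` then `doc_b` first, so the pair `doc_a` and `doc_c` is never returned together. For a high-recall task such as eDiscovery, a relevant combination that no query can surface is the kind of gap the excerpt warns about.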
